Tesla AI Day: More Than an Electric Car Company

On August 19, 2021, Tesla held its first AI Day, and it was one of the best technical presentations I have ever watched. What Elon Musk and his engineering team demonstrated that day made one thing clear: Tesla is far more than an electric car company. It is an AI company that happens to make cars.

Key Takeaways

- Tesla AI Day revealed that Tesla is fundamentally an AI company, with an end-to-end stack spanning data collection, auto-labeling, custom training hardware (Dojo), and deployment.

- Tesla's approach converts camera images into vector space representations, enabling the neural network to reason about 3D geometry without lidar or radar.

- The D1 chip behind the Dojo supercomputer targets GPU-level compute with CPU-level flexibility, and is designed specifically for the massive video training workloads autonomous driving requires.

The Goal: Recruit the Best

The explicit purpose of AI Day was to attract world-class AI engineers. Tesla needs more people to tackle the challenges ahead, and what better way to recruit than to open the hood and show exactly what they are building? The transparency was refreshing. Every slide was dense with real technical detail - no marketing fluff, no hand-waving. Just engineers explaining how things actually work.

How Do Tesla's Cars See the World?

The heart of the presentation was Tesla's computer vision pipeline - the system that allows the car to perceive and navigate the world autonomously.

From Image Space to Vector Space

One of the most impressive details: Tesla's neural networks predict in vector space, not in image space. Instead of processing each camera frame independently, the system fuses, in real time, footage from 8 cameras positioned around the vehicle and pointing in different directions. This multi-camera fusion creates a unified 3D representation of the environment - a bird's-eye understanding of everything around the car.

By predicting in vector space, the network reasons about the geometry of the real world rather than the pixels of individual images. The result is a richer, more consistent understanding of lanes, vehicles, pedestrians, and obstacles than per-camera processing can offer.
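To make the image-space versus vector-space distinction concrete, here is a minimal sketch that maps pixels from two cameras into one shared top-down frame. It is not Tesla's method: it uses classic flat-road pinhole geometry rather than a learned network, and the `Camera` class, the mounting values, and the pixel coordinates are all assumptions made up for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Camera:
    """Hypothetical pinhole camera with no pitch or roll, mounted on the car."""
    name: str
    yaw_rad: float    # heading of the optical axis, 0 = straight ahead
    height_m: float   # height of the lens above the road
    focal_px: float   # focal length in pixels
    cx: float         # principal point, x (pixels)
    cy: float         # principal point, y (pixels)


def pixel_to_ground(cam, u, v):
    """Map an image pixel to (x_forward, y_left) in metres on a flat road.

    Returns None for pixels at or above the horizon, whose rays never
    reach the ground.
    """
    if v <= cam.cy:
        return None
    # Distance along the optical axis where the pixel's ray hits the road.
    forward = cam.height_m * cam.focal_px / (v - cam.cy)
    # Pixels right of the image center correspond to points on the right.
    left = -forward * (u - cam.cx) / cam.focal_px
    # Rotate from the camera's heading into the shared vehicle frame.
    x = forward * math.cos(cam.yaw_rad) - left * math.sin(cam.yaw_rad)
    y = forward * math.sin(cam.yaw_rad) + left * math.cos(cam.yaw_rad)
    return (x, y)


def fuse(detections):
    """Merge per-camera pixel detections into one list of ground points."""
    points = []
    for cam, u, v in detections:
        ground = pixel_to_ground(cam, u, v)
        if ground is not None:
            points.append((cam.name, round(ground[0], 2), round(ground[1], 2)))
    return points


# Made-up mounting values, identical except for the heading.
front = Camera("front", 0.0, 1.5, 1000.0, 640.0, 360.0)
left_side = Camera("left", math.pi / 2, 1.5, 1000.0, 640.0, 360.0)

fused = fuse([
    (front, 640.0, 510.0),      # road pixel seen by the front camera
    (left_side, 640.0, 510.0),  # same pixel coordinates, left camera
    (front, 640.0, 100.0),      # sky pixel, dropped
])
print(fused)
```

The same pixel coordinates land in different places on the ground depending on which camera saw them, which is why downstream logic is easier to write against the shared frame than against eight separate images.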

4D Labeling and Auto Labeling

Tesla's data engine is equally impressive. The team discussed their approach to 4D labeling - annotating data across three spatial dimensions plus time. This captures not just where objects are, but how they move and interact over time.
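As a rough illustration of what "three spatial dimensions plus time" buys you, the sketch below stores a label as a position with a timestamp and derives motion from consecutive labels. The `Label4D` structure and the sample track are hypothetical; the presentation did not specify a label format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Label4D:
    """One hypothetical 4D label: an object's 3D position at a timestamp."""
    track_id: str
    t: float  # seconds
    x: float  # metres, forward
    y: float  # metres, left
    z: float  # metres, up


def velocities(track):
    """Finite-difference velocity (vx, vy, vz) between consecutive labels."""
    ordered = sorted(track, key=lambda label: label.t)
    result = []
    for prev, curr in zip(ordered, ordered[1:]):
        dt = curr.t - prev.t
        if dt <= 0:
            raise ValueError("timestamps in a track must be strictly increasing")
        result.append((
            (curr.x - prev.x) / dt,
            (curr.y - prev.y) / dt,
            (curr.z - prev.z) / dt,
        ))
    return result


# Made-up track: a car closing in on us, then drifting toward our lane.
track = [
    Label4D("car-7", 0.0, 20.0, 3.5, 0.0),
    Label4D("car-7", 0.5, 15.0, 3.5, 0.0),
    Label4D("car-7", 1.0, 10.0, 3.0, 0.0),
]
print(velocities(track))
```

A single-frame label could only say where the car is. With the time axis, the same data also says it is approaching at 10 m/s and has started moving sideways.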

They also detailed their auto labeling pipeline, which uses a more powerful offline model to automatically generate high-quality labels at scale. This is critical when you have a fleet of millions of vehicles collecting data - you cannot label everything by hand. The effort that goes into preparing training-ready data is enormous, and the engineers made it clear that data quality and labeling infrastructure are just as important as the model architecture itself.
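One common way to combine an offline model with a limited pool of human labelers is to auto-accept only confident predictions and queue the rest for review. The sketch below shows that pattern under stated assumptions: the `Prediction` type, the `triage` function, and the 0.9 threshold are hypothetical, and nothing here is taken from Tesla's actual pipeline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    """Output of a hypothetical offline labeling model for one clip."""
    clip_id: str
    label: str
    confidence: float  # 0.0 to 1.0


def triage(predictions, accept_at=0.9):
    """Split predictions into auto-accepted labels and clips for human review."""
    auto_labels = {}
    needs_review = []
    for pred in predictions:
        if pred.confidence >= accept_at:
            auto_labels[pred.clip_id] = pred.label
        else:
            needs_review.append(pred.clip_id)
    return auto_labels, needs_review


# Made-up model outputs for three clips.
predictions = [
    Prediction("clip-001", "pedestrian", 0.97),
    Prediction("clip-002", "cyclist", 0.55),
    Prediction("clip-003", "vehicle", 0.92),
]
auto_labels, needs_review = triage(predictions)
print(auto_labels)
print(needs_review)
```

The threshold is the knob that trades label quality against human workload: raising it sends more clips to review, lowering it accepts more machine labels.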

Simulating the Edge Cases

Perhaps the most underappreciated part of autonomous driving is handling rare and complex scenarios - the situations you almost never encounter but absolutely must get right. Tesla showed how they simulate these edge cases: unusual road geometries, erratic driver behavior, extreme weather conditions, and more. By generating synthetic scenarios, they can train the model on situations that would take years to collect from real-world driving.
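The idea of generating synthetic scenarios can be sketched as weighted random sampling that deliberately over-represents rare conditions. The categories echo the ones listed above, but the specific values, the weights, and the `sample_scenarios` helper are assumptions for illustration, not details from the presentation.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    road: str
    driver: str
    weather: str


# Hypothetical options with sampling weights. Rare cases get HIGHER weights
# than they would have in real driving, so the model sees them often.
ROADS = {"straight highway": 1, "roundabout": 3, "unmarked fork": 5}
DRIVERS = {"normal": 1, "sudden cut-in": 4, "wrong-way": 5}
WEATHER = {"clear": 1, "heavy rain": 3, "dense fog": 4}


def pick(rng, options):
    """Draw one key from a {value: weight} mapping."""
    return rng.choices(list(options), weights=list(options.values()), k=1)[0]


def sample_scenarios(n, seed=0):
    """Generate n scenarios; a fixed seed makes the batch reproducible."""
    rng = random.Random(seed)
    return [
        Scenario(pick(rng, ROADS), pick(rng, DRIVERS), pick(rng, WEATHER))
        for _ in range(n)
    ]


batch = sample_scenarios(5, seed=42)
for scenario in batch:
    print(scenario)
```

Seeding the generator matters in practice: when a model fails on a synthetic scenario, you want to be able to regenerate exactly that scenario to debug it.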

What Is Tesla's Dojo Supercomputer?

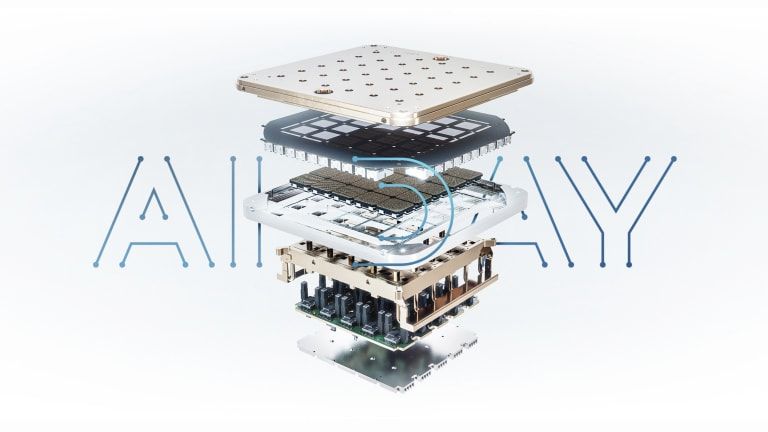

Then came Dojo - Tesla's supercomputer for training neural networks, built around the custom-designed D1 chip. The headline specs:

- 7nm technology

- GPU-level compute with CPU-level flexibility

- Massive I/O bandwidth that allows chips to tile together into a seamless training fabric

Dojo is Tesla's answer to the compute bottleneck. Training autonomous driving models on petabytes of video data requires purpose-built hardware, and Tesla decided to build it themselves rather than rely on off-the-shelf GPUs. The chip is designed from the ground up for the specific workloads that autonomous driving demands - a bold and expensive bet that signals how seriously Tesla takes the compute side of the AI problem.

The Tesla Bot

The presentation ended with a surprise: the Tesla Bot - a humanoid robot designed to handle boring, repetitive, and dangerous tasks that humans would rather avoid.

The idea is that the same AI and computer vision systems powering autonomous driving can be generalized to a humanoid form factor. If you can teach a car to navigate a complex, unpredictable world, you can apply similar principles to a robot navigating a warehouse or a factory floor.

And in case you were worried about a robot uprising - Musk reassured the audience that you can outwrestle it or simply outrun it, since its top speed is capped at 5 mph (about 8 km/h).

Why This Matters Beyond Self-Driving

What struck me most about AI Day was not any single announcement, but the breadth of innovation on display. Everywhere you looked, it was either state of the art, a step beyond that, or an entirely new way of doing things. The innovations in computer vision, data labeling, simulation, and custom silicon are not limited to autonomous driving. These are general-purpose capabilities that can be applied across robotics, manufacturing, healthcare, and beyond.

Tesla is building an end-to-end AI stack - from data collection to labeling to training infrastructure to deployment - and the implications extend far beyond putting a car on autopilot.

Final Thoughts

I did not even scratch the surface of everything discussed at Tesla AI Day. If you are an AI enthusiast, I would strongly recommend watching the full presentation on Tesla's official YouTube channel. The depth of technical detail is rare for a corporate event, and it offers a fascinating look at where autonomous AI systems are heading.

Plus, you will get to enjoy a few signature Elon moments along the way.
